In recent years, Hadoop has become one of the most popular platforms for big data projects. Hadoop is an open-source platform that allows for the distributed processing of large data sets across a cluster of commodity servers.

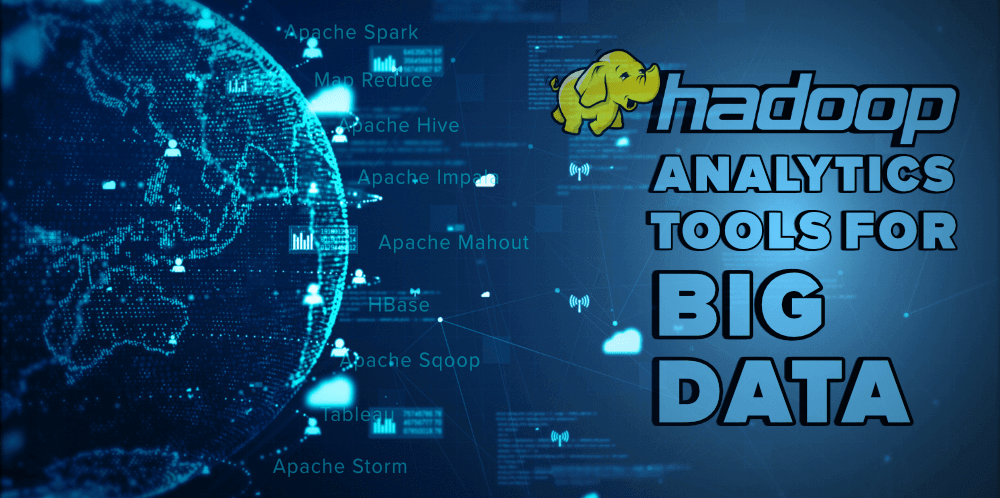

Many different tools can be used for analytics on Hadoop. As data grows more complex and harder to manage, these tools have become central to storing and processing it. This article looks at 10 Hadoop analytics tools that are widely used in big data projects.

"10 Hadoop Analytics Tools That Will Help You"

If you want to stay ahead of the competition in the world of big data, you need to know about Hadoop analytics tools. These tools can help you make sense of large amounts of data quickly and easily. In this article, we will introduce you to 10 Hadoop analytics tools that will be useful for your big data projects.

Hadoop is an open-source software framework for storing and processing big data. It has been around for over a decade and is used by many large organizations, such as Facebook, Twitter, and LinkedIn.

The rise of big data has led to a demand for Hadoop analytics tools that can help organizations make sense of their data. There are many different types of Hadoop analytics tools available, each with its own strengths and weaknesses.

Top 10 Hadoop Analytics Tools For Big Data

Hadoop is an Apache open source framework that is designed for distributed storage and processing of big data sets across computer clusters. Hadoop is not a single tool but rather a platform that consists of many related tools. Some of these tools are designed for specific tasks while others can be used for a variety of purposes.

1. Apache Spark:

With the ever-growing popularity of big data, the need for efficient and reliable tools to process this data is more important than ever. Apache Spark is one of these tools, and it is becoming increasingly popular for its speed and flexibility.

Spark was originally developed at UC Berkeley in 2009, and it has been open source since 2010. The project was donated to the Apache Software Foundation in 2013 and became a top-level Apache project in 2014.

Spark has many features that make it well suited for big data applications, including support for multiple programming languages, in-memory processing, and streaming data.

Despite its young age, Apache Spark has already seen widespread adoption, with users including Facebook, Yahoo, and eBay.

2. MapReduce:

MapReduce is Hadoop's original processing model, designed to handle large-scale data processing by breaking a job down into smaller, more manageable chunks. A map step processes each chunk in parallel and emits key-value pairs, and a reduce step then combines the values for each key into the final result.
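The idea of splitting work into chunks can be sketched in a few lines of plain Python. This is a minimal single-process illustration of the map, shuffle, and reduce steps using a word count, not Hadoop's actual API; the function names `map_phase`, `shuffle`, `reduce_phase`, and `word_count` are made up for this example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(chunk: str) -> Iterator[Tuple[str, int]]:
    """Map: emit a (word, 1) pair for every word in one input chunk."""
    for word in chunk.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle: group all emitted values by their key."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reduce: combine all values for one key into a single result."""
    return key, sum(values)


def word_count(chunks: Iterable[str]) -> Dict[str, int]:
    # On a real cluster each chunk would be mapped on a different node;
    # here the chunks are simply processed one after another.
    mapped = (pair for chunk in chunks for pair in map_phase(chunk))
    grouped = shuffle(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    chunks = ["big data needs big tools", "hadoop tools process big data"]
    print(word_count(chunks))
```

Because each chunk is mapped independently and each key is reduced independently, both steps can in principle be spread across many machines, which is what makes the model suitable for large data sets.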

3. Apache Impala:

There are many open source SQL engines to choose from, each with its own benefits. Apache Impala is a high-performance engine well suited to interactive and ad hoc queries. It was originally developed by Cloudera, drawing on ideas from Google's Dremel paper, and is now an integral part of the Hadoop ecosystem.

4. Apache Hive:

Apache Hive is a data warehouse system that has become a popular way to query and manage large data sets residing in Hadoop. It provides an SQL-like interface to Hadoop and can query data stored in HBase or in any Hadoop-compatible file system, such as HDFS, Amazon S3, or Alluxio.
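To give a feel for what an SQL-like interface means in practice, the sketch below runs the kind of aggregate query an analyst might write against a warehouse table. It uses Python's built-in `sqlite3` module as a local stand-in and does not talk to Hive; the `page_views` table, its columns, and the `top_pages` helper are hypothetical.

```python
import sqlite3
from typing import Iterable, List, Tuple


def top_pages(rows: Iterable[Tuple[str, int]], limit: int = 2) -> List[Tuple[str, int]]:
    """Return the most viewed pages using a plain SQL aggregate query."""
    # An in-memory SQLite database stands in for the warehouse here.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE page_views (page TEXT, user_id INTEGER)")
    conn.executemany("INSERT INTO page_views VALUES (?, ?)", rows)

    # Group, count, and sort: the declarative style that SQL-like
    # interfaces offer instead of hand-written processing jobs.
    query = """
        SELECT page, COUNT(*) AS views
        FROM page_views
        GROUP BY page
        ORDER BY views DESC, page ASC
        LIMIT ?
    """
    result = conn.execute(query, (limit,)).fetchall()
    conn.close()
    return result


if __name__ == "__main__":
    sample = [
        ("/home", 1), ("/home", 2), ("/docs", 1),
        ("/home", 3), ("/docs", 2), ("/about", 1),
    ]
    print(top_pages(sample))
```

The point of the example is the query itself: the user states what result is wanted and leaves it to the engine to work out how to compute it over the stored data.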

5. Apache Mahout:

Big data is becoming more and more popular, and with that comes a need for better tools to process it. Apache Mahout is one such tool, designed to help with the machine learning and data mining aspects of big data processing. It provides scalable implementations of common techniques such as clustering, classification, and collaborative filtering.

6. Apache Pig:

Apache Pig is a platform for analyzing large data sets. Data analysis programs are written in its high-level language, Pig Latin, which is designed to be easy to learn for those who are new to coding while still handling complex tasks for experienced developers.

7. HBase:

HBase is a powerful open-source, column-oriented database management system that runs on top of the Hadoop Distributed File System (HDFS). It was originally developed as a part of the Apache Hadoop project and is now a top-level Apache project in its own right.

HBase is designed to provide quick random access to large amounts of data stored in HDFS. It does this by indexing data by row key and column family, allowing for fast lookup and retrieval of data. HBase also supports major features such as versioning, atomic writes, and Bloom filters.
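The lookup scheme described above can be pictured as a nested map: row key, then column, then a list of timestamped versions. The class below is a toy in-memory model of that layout, offered only as an illustration under that assumption; `ToyTable` and its `put` and `get` methods are invented for this sketch and are not the HBase client API.

```python
import time
from collections import defaultdict
from typing import Any, Dict, Iterable, List, Optional, Tuple


class ToyTable:
    """Toy model of a wide-column table with versioned cells."""

    def __init__(self, column_families: Iterable[str]) -> None:
        self.column_families = set(column_families)
        # row key -> "family:qualifier" -> [(timestamp, value), ...], newest first
        self._rows: Dict[str, Dict[str, List[Tuple[int, Any]]]] = defaultdict(
            lambda: defaultdict(list)
        )

    def put(self, row_key: str, column: str, value: Any,
            timestamp: Optional[int] = None) -> None:
        """Store a new version of a cell under an existing column family."""
        family = column.split(":", 1)[0]
        if family not in self.column_families:
            raise KeyError(f"unknown column family: {family}")
        ts = time.time_ns() if timestamp is None else timestamp
        cells = self._rows[row_key][column]
        cells.append((ts, value))
        cells.sort(key=lambda cell: cell[0], reverse=True)

    def get(self, row_key: str, column: str, versions: int = 1) -> List[Any]:
        """Return up to `versions` values for a cell, newest first."""
        if row_key not in self._rows:
            return []
        cells = self._rows[row_key].get(column, [])
        return [value for _, value in cells[:versions]]


if __name__ == "__main__":
    table = ToyTable(["info"])
    table.put("user1", "info:email", "old@example.com", timestamp=1)
    table.put("user1", "info:email", "new@example.com", timestamp=2)
    print(table.get("user1", "info:email"))
    print(table.get("user1", "info:email", versions=2))
```

Looking a value up by row key and column is a couple of dictionary accesses in this model, which mirrors why keyed reads are fast, and keeping older timestamped values alongside the newest one is what versioning refers to.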

While HBase can be used as a stand-alone database, it is often used in conjunction with other tools in the Hadoop ecosystem such as Hive and Pig. This allows for the complex processing of large data sets using a variety of different techniques.

8. Apache Sqoop:

In recent years, big data has become increasingly important to businesses and organizations across the globe, and much of that data still lives in relational databases. Apache Sqoop is a tool designed for efficiently transferring data between Apache Hadoop and relational databases. Sqoop can be used to import data from a database into HDFS, as well as to export data from HDFS back into a database.

9. Apache Storm:

In recent years, Apache Storm has gained a lot of popularity as a tool for processing real-time data. Storm is a distributed, open source system that processes streams of records continuously as they arrive, rather than working on batches of stored data the way MapReduce does. That makes it an attractive choice for real-time analytics.

10. Apache Flume:

Apache Flume is a data collection service for Hadoop. It is designed to provide a simple, reliable, and efficient means for collecting and transferring large amounts of data. Flume is highly configurable and extensible, with a rich set of features.